Crawl-Walk-Run Has Evaporated: SOC Leaders on Embedding AI Automation into Security Workflows

Hosted by Guardians of the Machine Age. April 28, 2026.

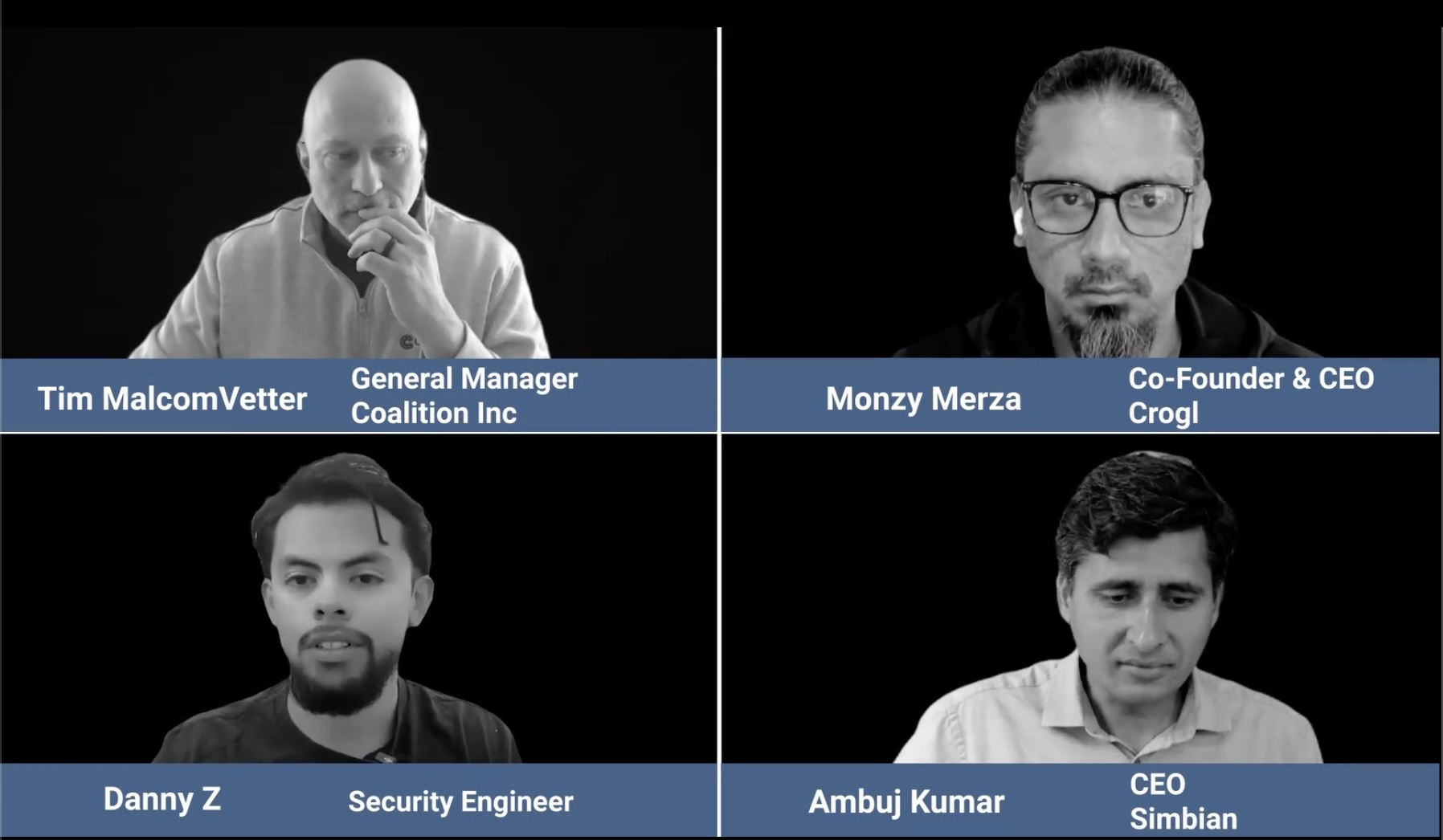

- Monzy Merza, Co-Founder & CEO, Crogl

- Tim MalcomVetter, General Manager, Coalition Inc

- Ambuj Kumar, CEO, Simbian

- Danny Z, Security Engineer, AI2

The panel was framed around a practical question: how do security teams actually get automation and AI into SOC workflows? What resulted was a session that challenged several things most security leaders treat as settled. The crawl-walk-run adoption model was declared insufficient. Mean time to detect was called a dead metric. And the long-standing argument for centralizing all security data got a public retraction from someone who spent years making that argument.

What follows is a distillation of the panel's core observations, prescriptions, and buying guidance. Attribution is noted throughout because panelists did not always agree on emphasis.

The Crawl-Walk-Run Window Has Closed

Monzy opened with a direct argument about the adoption model most security leaders have relied on. Crawl-walk-run "has been applied by a lot of security leaders," he said, and it made sense for a long time. It no longer does.

"The whole stack has been compressed," he said. "There's no more time." He pointed to a recent sophisticated campaign against the Mexican government as a concrete example of how quickly attack timelines now move. His conclusion: the opportunity to do crawl, walk, run "has evaporated."

The panel was careful here. Risk management does not disappear because the window is compressed. Another panelist said organizations still need bounded test environments: a department, a business unit, a newly acquired company transitioning to a new architecture. The prescription was to go all in on that bounded scope. What the panel rejected was treating adoption as a slow, linear progression across the whole organization.

The distinction matters. Parallelized transition within a defined perimeter is different from a leisurely crawl-walk-run across everything. Monzy's prescription: "security leaders and security practitioners really have to start thinking about what does the future state look like" and design toward that, not from where they are today.

Why MTTD Is No Longer the Right North-Star Metric

Tim made the sharpest argument on measurement. Mean time to detect (MTTD), he said, is largely outside a security team's control. In modern detection pipelines, the timing of upstream telemetry is determined by platform vendors. Security teams are optimizing a metric they cannot meaningfully influence.

The metric that actually matters is mean time to verdict (MTTV): the time it takes to decide whether an event is good or bad and whether action is required. That is something the SOC controls.
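To make the metric concrete, here is a minimal sketch of how MTTV could be computed. It assumes each alert record carries two hypothetical timestamps, `received_at` (when the alert reached the SOC) and `verdict_at` (when a good-or-bad decision was logged). The field names and the choice of where to start the clock are assumptions for illustration; the panel did not define them.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean
from typing import Optional


@dataclass
class Alert:
    alert_id: str
    received_at: datetime            # assumed start of the clock: alert reaches the SOC
    verdict_at: Optional[datetime]   # when a verdict was recorded; None if still open


def mean_time_to_verdict(alerts: list[Alert]) -> Optional[timedelta]:
    """Average of (verdict_at - received_at) over alerts that have a verdict."""
    durations = [
        (a.verdict_at - a.received_at).total_seconds()
        for a in alerts
        if a.verdict_at is not None
    ]
    if not durations:
        return None
    return timedelta(seconds=mean(durations))


t0 = datetime(2026, 4, 28, 9, 0, 0)
alerts = [
    Alert("a1", t0, t0 + timedelta(minutes=4)),
    Alert("a2", t0, t0 + timedelta(minutes=10)),
    Alert("a3", t0, None),  # still open, excluded from the mean
]
mttv = mean_time_to_verdict(alerts)
print(mttv)  # 0:07:00
```

Excluding open alerts keeps the sketch simple but flatters the number when hard cases sit unresolved, so a real report would likely track the open backlog alongside it.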

He was equally direct about MTTR. Vendors and MSSPs often use "mean time to respond" and "mean time to remediate" interchangeably even though the two are defined differently, so an MTTR figure means little until you know which one is being reported. "Critical alert" benchmarks can mislead in the same way if you do not inspect how severity was assigned upstream. Low-, medium-, and high-severity alerts can combine into a multi-stage attack, and filtering on pre-labeled criticals removes the ability to detect the full chain.

His prescription: look at everything, quickly, with a high level of automation. A cluster of events that looks unremarkable individually can be critical in totality. The goal is not a smaller queue. It is faster verdicts across a complete picture.
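One way to picture "critical in totality" is a correlation pass that ignores the upstream severity label and asks whether events on the same host span several attack stages within a short window. The sketch below is illustrative only: the `stage` tags, the 60-minute window, and the three-stage threshold are all assumptions, not anything the panel specified.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Event:
    host: str
    minute: int      # minutes since a reference time, to keep the example small
    severity: str    # upstream label: "low", "medium", "high"
    stage: str       # hypothetical stage tag, e.g. "initial_access"


def find_chains(events, window=60, min_stages=3):
    """Return hosts whose events cover at least `min_stages` distinct stages
    within any `window`-minute span, ignoring the upstream severity label."""
    by_host = defaultdict(list)
    for e in events:
        by_host[e.host].append(e)

    flagged = {}
    for host, evs in by_host.items():
        evs.sort(key=lambda e: e.minute)
        for i, start in enumerate(evs):
            in_window = [e for e in evs[i:] if e.minute - start.minute <= window]
            stages = {e.stage for e in in_window}
            if len(stages) >= min_stages:
                flagged[host] = sorted(stages)
                break
    return flagged


events = [
    Event("web-01", 0, "low", "initial_access"),
    Event("web-01", 12, "medium", "lateral_movement"),
    Event("web-01", 40, "low", "exfiltration"),
    Event("db-02", 5, "high", "initial_access"),
]
print(find_chains(events))
# {'web-01': ['exfiltration', 'initial_access', 'lateral_movement']}
```

In this toy data the only "high" alert is on `db-02`, so a queue filtered to high severity would never surface the `web-01` chain.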

Fragmented Data Is the Real Operating Environment

One of the sharpest moments in the session came from Monzy on data architecture. He said he had spent years at Splunk and Databricks making the industry argument for putting all security data in one place. Then he said: "I was wrong."

Real customer environments are not clean centralized lakes. They are "three SIEMs, six data lakes" spread across on-premises infrastructure, cloud environments, and storage platforms with incompatible schemas, different access methods, and no shared query language. For operational organizations, he said, the single-lake ideal "has always been out the window."

His argument was not that visibility is unimportant. It was that visibility cannot require normalization into a single system as a prerequisite. He said "people can give themselves permission that it's okay to have fragmentation" and design systems that can analyze data no matter its format, schema, footprint, or access method.

Tim added an economic argument. The cost of fragmented data is not primarily storage. It is the network movement and compute required to transform data before storing it. Organizations that architect around centralized consolidation pay that cost continuously. As response windows compress, unnecessary data movement becomes harder to justify.

The panel's preferred framing: treat the federated data lake as the starting condition, not a problem to be solved before AI can work. Build around it. Do not wait for it to go away.
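A rough sketch of what building around fragmentation can look like: each source keeps its native schema, a small adapter answers one question against that source in place, and a fan-out function collects the hits with their origin attached. The source names, record shapes, and adapter functions here are hypothetical stand-ins, and in-memory lists substitute for real SIEM or data lake queries.

```python
from typing import Callable, Iterable

# Two hypothetical sources with incompatible schemas, kept in their native shape.
SIEM_A = [{"src_ip": "10.0.0.5", "msg": "failed login"},
          {"src_ip": "10.0.0.9", "msg": "port scan"}]
LAKE_B = [{"client": {"address": "10.0.0.5"}, "event": "s3 download"}]

# Each adapter knows how to answer one question against one source in place.
Adapter = Callable[[str], Iterable[dict]]


def search_siem_a(ip: str) -> Iterable[dict]:
    return (r for r in SIEM_A if r["src_ip"] == ip)


def search_lake_b(ip: str) -> Iterable[dict]:
    return (r for r in LAKE_B if r["client"]["address"] == ip)


ADAPTERS: dict[str, Adapter] = {
    "siem_a": search_siem_a,
    "lake_b": search_lake_b,
}


def federated_search(ip: str) -> list[dict]:
    """Fan the query out to every source and tag each hit with its origin.
    Records are returned as-is; nothing is copied into a central schema."""
    hits = []
    for name, adapter in ADAPTERS.items():
        for record in adapter(ip):
            hits.append({"source": name, "record": record})
    return hits


results = federated_search("10.0.0.5")
for hit in results:
    print(hit["source"], hit["record"])
```

The design choice is that schema knowledge lives in the adapter, so adding a seventh data lake means writing one more function rather than running another transformation pipeline.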

Why AI Makes Reactive Security Operations Insufficient

One panelist argued this point directly: the reactive SOC model does not hold when AI-assisted attackers move at wire speed. Once an attacker is inside a network, the sequence from execution to lateral movement to exfiltration can happen too quickly for a purely reactive workflow to respond.

Monzy connected this to alert volume. The alert numbers security teams have been working with are effectively pre-AI numbers, and the environment is changing in two directions simultaneously. First, attacker capability is more broadly available. Monzy described intelligence as "sort of commoditized at this point." Another panelist added that attackers now have more know-how, can exploit known vulnerabilities, find new ones, and push phishing and URL-based attacks through defenses with very little domain expertise and few resources.

At the same time, AI is generating new categories of internal operational and compliance complexity: transitive trust problems, agents doing unsupervised activity, shadow AI, and people uploading work data into outside services to manage productivity pressure.

Monzy's framing on AI itself: "AI usage is now a common denominator." Defenders have it. Attackers have it. Vendors have it. MSSPs have it. "It is just table stakes." The panel's point was not that AI is useless. It was that AI alone is not a durable competitive advantage. How it is deployed, governed, and evaluated is what separates teams that are getting ahead of the threat from teams that are not.

The shift the panel described was from reactive to proactive: understanding what is happening across the environment before a threat actor completes their objective, not after.

Where AI Can Be Introduced into SOC Workflows First

The most concrete implementation guidance came from the adoption discussion. One panelist said teams should stop treating AI as something to compete with and start identifying where it makes sense to hand off specific workflow steps.

Three lower-risk entry points were named:

- Alert enrichment using LLMs, especially when deterministic playbooks fail because the alert type is new or has not been encountered before. This is where probabilistic reasoning adds value that rule-based automation cannot.

- Summarization and explanation of technical findings. Not replacing analyst judgment, but reducing the time to understand and communicate what happened.

- Earlier involvement in detection engineering, using knowledge of important assets and how they have been targeted before to inform what coverage gaps to close.
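The first entry point, enrichment that falls back to a model only when no deterministic playbook applies, can be sketched as follows. The playbook table, the alert fields, and the `llm` callable are all hypothetical; `fake_llm` is a canned stand-in so the example runs offline and does not represent any real model API.

```python
from typing import Callable

# Hypothetical deterministic playbooks keyed by alert type.
PLAYBOOKS: dict[str, Callable[[dict], dict]] = {
    "failed_login": lambda a: {"note": f"check lockout policy for {a['user']}"},
}


def enrich(alert: dict, llm: Callable[[str], str]) -> dict:
    """Use the deterministic playbook when one exists; otherwise ask the model.
    The result records which path produced it so the decision can be reviewed."""
    playbook = PLAYBOOKS.get(alert["type"])
    if playbook is not None:
        return {**alert, "enrichment": playbook(alert), "enriched_by": "playbook"}
    prompt = f"Summarize likely cause and next triage step for alert: {alert}"
    return {**alert, "enrichment": {"note": llm(prompt)}, "enriched_by": "llm"}


def fake_llm(prompt: str) -> str:
    # Stand-in for a real model call; returns a canned string.
    return "unrecognized alert type; review process tree on the host"


known = enrich({"type": "failed_login", "user": "alice"}, fake_llm)
novel = enrich({"type": "odd_dns_tunnel", "host": "web-01"}, fake_llm)
print(known["enriched_by"], novel["enriched_by"])  # playbook llm
```

Passing the model in as a parameter keeps the deterministic path testable on its own and makes it explicit which alerts were handled probabilistically.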

Monzy drew a line between the tactical and the foundational: "the use case stuff is going to be easy." The harder problems are architectural and are where most AI SOC products are under-evaluated.

What to Evaluate in AI SOC Products Beyond Hallucinations

Monzy made one of the sharpest buying-criteria arguments of the session. Buyers spend too much attention on hallucinations. The foundational problems get too little.

His list of the real evaluation criteria:

- Authentication and authorization: how the product handles identity and access within the system

- Secrets management: "the thing that's hard is how you build a secrets management system"

- Session management and session timeouts: whether the product handles these correctly by design

- User and privilege partitioning: whether data and actions are properly scoped per analyst and role

- Operating model: whether the product is SaaS, customer-managed, or self-managed, and what that means for data exposure and sovereignty

Then he went further. Even once secrets management is addressed, he said, "that is not even the hardest problem here." The harder problem is context reconciliation. Large organizations hold conflicting information across multiple systems, and speed alone does not resolve those conflicts. The system needs to maintain the most precise understanding possible given all available knowledge, and that context should not degrade over time.
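As a toy illustration of what context reconciliation involves, the sketch below takes conflicting claims about an asset from different systems, picks the most recently observed value, and keeps every claim plus a conflict flag so disagreement stays visible. The latest-wins policy, the system names, and the fields are assumptions chosen for the example; the panel did not prescribe a reconciliation method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    source: str       # hypothetical system name, e.g. "cmdb"
    field: str
    value: str
    observed: int     # observation time as a simple integer for the example


def reconcile(claims: list[Claim]) -> dict:
    """For each field, pick the most recently observed value, but keep every
    claim and flag disagreement so the conflict is visible rather than lost."""
    out: dict[str, dict] = {}
    for c in claims:
        entry = out.setdefault(c.field, {"claims": []})
        entry["claims"].append(c)
    for entry in out.values():
        latest = max(entry["claims"], key=lambda c: c.observed)
        entry["value"] = latest.value
        entry["from"] = latest.source
        entry["conflict"] = len({c.value for c in entry["claims"]}) > 1
    return out


claims = [
    Claim("cmdb", "owner", "team-payments", observed=100),
    Claim("edr", "owner", "team-platform", observed=250),
    Claim("cmdb", "os", "linux", observed=100),
]
view = reconcile(claims)
print(view["owner"]["value"], view["owner"]["conflict"])  # team-platform True
print(view["os"]["value"], view["os"]["conflict"])        # linux False
```

Retaining the losing claims is the point: a verdict that depended on a contested fact can be revisited when newer information arrives.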

One panelist made a parallel argument against the default approach of collecting everything. The prescription was to back-solve: decide what data is needed based on the outcomes the team needs to achieve, not collect everything, log it, and pay for it at scale.

Outcomes, Consistency, and Transparency

Tim closed with a framing that grounded the whole discussion. The goal is not to claim AI features. The goal is to stop threat actors. Quickly. Repeatably. Consistently. Transparently. With logged decision-making that can be reviewed.

The implicit critique across the session was that too many teams measure symptoms and siloed metrics instead of what is happening at the system level. MTTD is a symptom metric. Alert volume is a symptom metric. The system-level question is whether the team can produce reliable security outcomes when the next phase of attacker behavior arrives.

Speed matters. Context matters. Architectural rigor matters. The panel's argument was that none of them are sufficient alone.

This post distills the panel discussion at Guardians of the Machine Age, April 28, 2026. It reflects what was said in the session and does not represent the independent views of any organization.

Frequently asked questions

Is the crawl-walk-run model still valid for AI adoption in the SOC?

The panel argued it is no longer sufficient. Attack timelines have compressed to the point where gradual, phased adoption across an entire organization cannot keep pace with the threat. The panel's recommendation was not to abandon risk management, but to go all in within a bounded scope. A department, a business unit, or a newly acquired company can serve as the test environment. The distinction the panelists drew was between a parallelized transition within a defined perimeter and a slow linear progression across everything.

Why is mean time to detect (MTTD) considered a weak metric?

Tim argued that MTTD is largely outside a security team's control. The timing of upstream telemetry is determined by the platform vendors providing the detection signals, not by the SOC. Optimizing MTTD means optimizing something the team cannot meaningfully influence. The metric he proposed as more useful is mean time to verdict (MTTV): the time it takes to decide whether an event is good or bad and whether action is required. That is a decision the SOC controls.

Why shouldn't security teams just filter out low and medium alerts to reduce noise?

Because the relevant signal in a multi-stage attack may only emerge when multiple lower-severity events are viewed together. Tim argued that filtering alerts based on pre-labeled severity removes the ability to detect the full chain of a sophisticated campaign. Low, medium, and high alerts can be critical in totality even when none triggers a standalone escalation. Looking only at pre-labeled criticals assumes you trust the upstream severity assignment, which was presented as an unsafe assumption.

Should organizations consolidate all security data into one place before deploying AI?

The panel challenged this directly. Monzy said he had spent years arguing for centralized data consolidation and called that argument wrong. Real enterprise environments are already fragmented across multiple SIEMs, data lakes, on-premises systems, and cloud platforms with incompatible schemas. The panel's prescription was to accept fragmentation as the starting condition and build systems that can analyze data regardless of format, schema, footprint, or access method. Tim added that forcing consolidation creates ongoing network movement and compute costs that become harder to justify as response windows shrink.

Is AI still a meaningful competitive advantage for security teams?

Not by itself, according to the panel. Monzy framed AI as a baseline: "AI usage is now a common denominator." Defenders have it, attackers have it, vendors have it, and MSSPs have it. The panel's argument was that AI is now table stakes. How it is deployed, how it is governed, and how well the underlying architecture supports it is what creates operational differentiation. A team with AI and poor context reconciliation is not better positioned than a team without AI that has strong system-level understanding.

What should security teams evaluate in AI SOC tools beyond hallucinations?

Monzy named a specific list: authentication and authorization, secrets management, session management and session timeouts, user and privilege partitioning, and whether the operating model is SaaS, customer-managed, or self-managed. He argued that buyers focus too much attention on hallucinations and too little on whether the AI product is secure in itself and whether it supports secure operator use. He went further: even a product that handles secrets management correctly may still fall short on context reconciliation, which he called the harder problem.

Should security teams collect as much data as possible to improve visibility?

One panelist argued against the collect-everything model. His prescription was to back-solve from outcomes: decide what data is needed to achieve specific investigative goals, and collect based on that. Collecting everything, logging it, and paying for it at scale was presented as a default that does not hold up to principled evaluation. The panel's framing of visibility was about the ability to access and reason across data when needed, not about volume or centralization.